CNO Validation Framework

Information is increasingly the basis of important business decisions, but this only works if the underlying data is correct and complete. Otherwise the well-known “garbage in, garbage out” effect sets in. Businesses therefore need to pay more attention to data governance and data quality. With the Validation Framework, CAD ‘N ORG provides a powerful tool for ensuring data consistency and quality in the PLM system Teamcenter.

Goals

- Avoid inconsistent data at the time of creation

- Identify incomplete or outdated information

- Speed up process flows and improve data quality in the PLM system

Features

- Pre-validation by the user via wizards

- Integration into any application and existing workflow processes

- Easy definition of check rules and validation criteria within Teamcenter

- Check rules can be defined for individual parts and subassemblies at different depths

- Analysis function to ensure data quality

- Validation of legacy data or migrated data

- Report functionality with connection to existing reporting solutions

- Cleanup functionality for faulty data

- Validation during data creation and directly in the design process

- Fully integrated with Teamcenter

Requirements

- Teamcenter (other PLM systems on request)

- Linux and Windows support

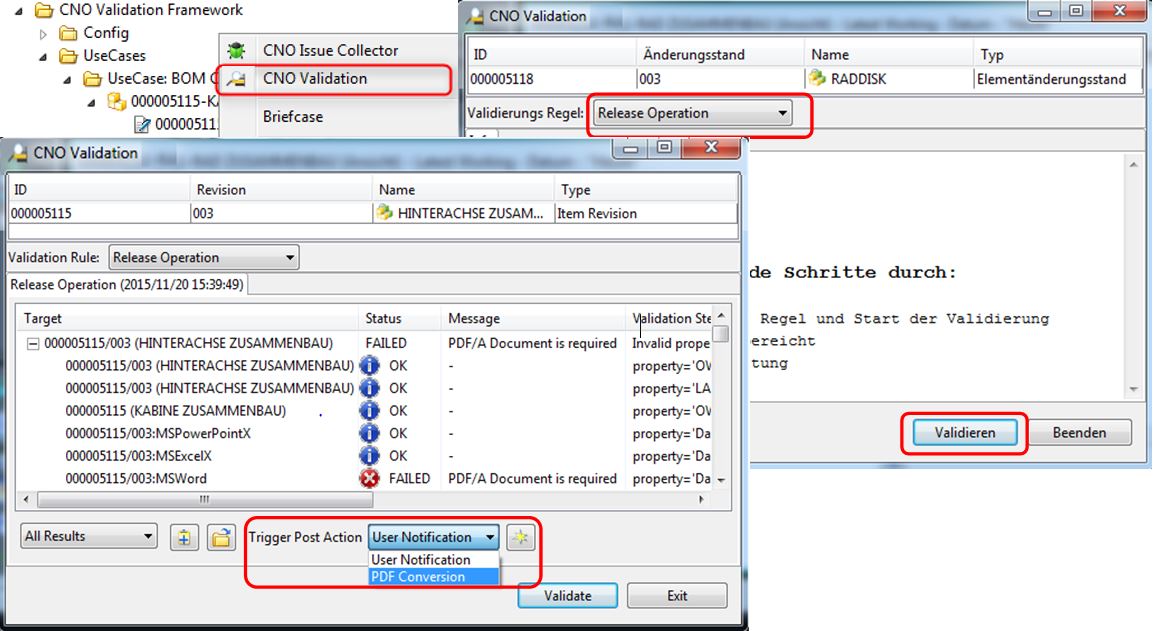

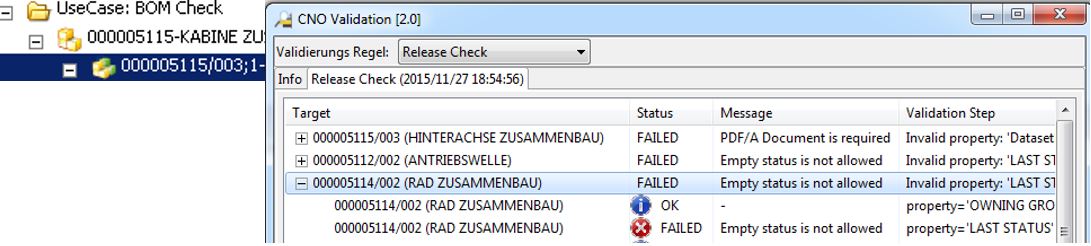

Sample: Teamcenter Rich Client Integration

Ensuring data and process quality through integrated wizards

Process reliability and excellent data quality with the Validation Framework

Ad-Hoc Validation

- Preliminary validation by the user before initiating the approval process

- Start of follow-up processing depending on whether the data is valid or invalid

- Option for direct, guided correction

- Training of users on internal quality requirements

Workflow Validation

- Integrate validation checks into existing workflows

- Recursive validation of assemblies using expansion and configuration rules

- Run checks in any process step and reduce the complexity of your workflows
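As a rough illustration of recursive validation over an assembly, the following minimal sketch walks a tree of items and applies check rules down to a chosen depth. It is not the Validation Framework's API: the `Item` class, the `require` rule and the `max_depth` parameter are hypothetical stand-ins, and the depth limit is only a simplified assumption of what an expansion rule might control.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Item:
    """Hypothetical stand-in for a PLM item; not a Teamcenter class."""
    item_id: str
    attributes: dict
    children: List["Item"] = field(default_factory=list)


# A check rule returns an error message, or None when the item passes.
Rule = Callable[[Item], Optional[str]]


def require(attr: str) -> Rule:
    """Build a rule that fails when the attribute is missing or empty."""
    def check(item: Item) -> Optional[str]:
        if not item.attributes.get(attr):
            return f"{item.item_id}: missing '{attr}'"
        return None
    return check


def validate(item: Item, rules: List[Rule], max_depth: int, depth: int = 0) -> List[str]:
    """Apply all rules to the item, then recurse into children up to max_depth."""
    errors = [msg for rule in rules if (msg := rule(item)) is not None]
    if depth < max_depth:
        for child in item.children:
            errors.extend(validate(child, rules, max_depth, depth + 1))
    return errors


# Illustrative data only.
bolt = Item("BOLT-1", {"material": "steel"})
bracket = Item("BRKT-1", {"material": ""}, [Item("WASH-1", {})])
assembly = Item("ASM-1", {"material": "n/a"}, [bolt, bracket])

# With max_depth=1 only the top level and its direct children are checked.
errors = validate(assembly, [require("material")], max_depth=1)
print(errors)
```

Raising `max_depth` to 2 in this sketch would also reach `WASH-1`, which shows how the same rule set can be applied at different depths of an assembly.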

Input Validation

- Avoiding the creation of invalid objects

- Hints that guide users in filling out forms correctly

- Detection of duplicates

- Integration into all PLM components (data model, CAD, interfaces, …)

Reports

- Query engine for providing data to BI systems for further analysis and initiation of improvement actions

Mass data correction

- Cleanup of incorrectly or incompletely migrated data

- Identification and correction of incomplete legacy data

Configuration Assistant

- Easy creation of validation rules in Teamcenter

- Mapping of complex rules via drag and drop

- Direct access to the Teamcenter data model without lengthy training

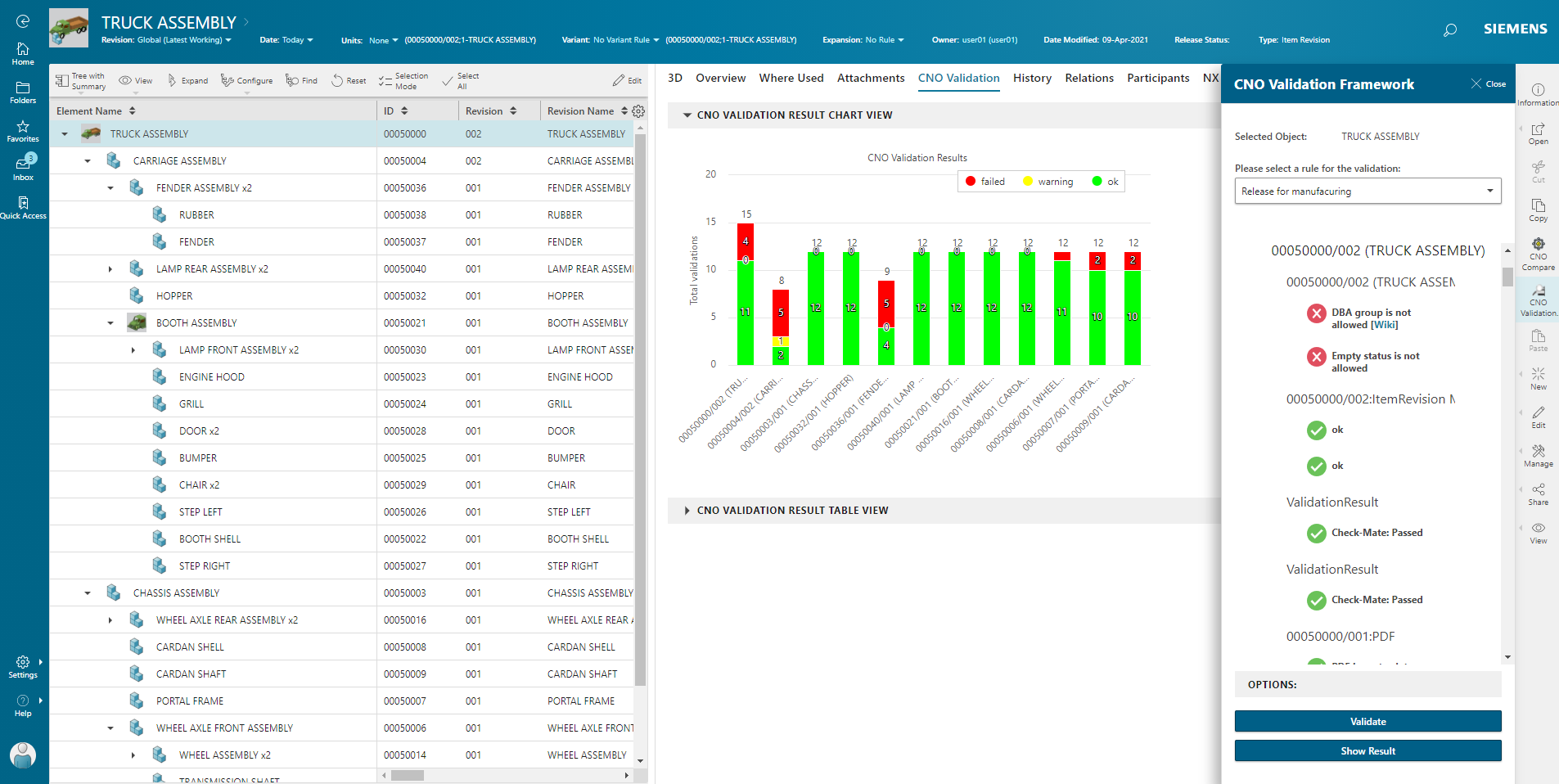

Sample: Active Workspace Integration

Ensuring data and process quality through deep integration in all modules and interfaces

Usage

The Validation Framework is easy to use and integrates readily into your existing PLM environment. Validation can be run ad hoc by the user (for example as a pre-check in the client), automatically as part of the release workflow, or as a proactive analysis of the database. The validation criteria are configurable and can be adapted on the fly as requirements grow.
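To make the idea of configurable criteria concrete, here is a minimal sketch in which rules are plain data that can be extended at runtime without touching the checking code. The rule format, the attribute names and the `check` function are assumptions made for illustration; they do not reflect how the Validation Framework actually stores or evaluates its rules.

```python
import re
from typing import Dict, List

# Hypothetical rule configuration; fields and attribute names are illustrative only.
RULES: List[Dict[str, str]] = [
    {"attribute": "item_name", "kind": "required"},
    {"attribute": "part_number", "kind": "pattern", "value": r"\d{6}"},
]


def check(record: Dict[str, str], rules: List[Dict[str, str]]) -> List[str]:
    """Evaluate every configured rule against the record and collect findings."""
    findings = []
    for rule in rules:
        value = record.get(rule["attribute"], "")
        if rule["kind"] == "required" and not value:
            findings.append(f"{rule['attribute']} is required")
        elif rule["kind"] == "pattern" and not re.fullmatch(rule["value"], value):
            findings.append(f"{rule['attribute']} does not match {rule['value']}")
    return findings


record = {"item_name": "Bracket", "part_number": "12345"}

# Findings with the initial rule set.
before = check(record, RULES)

# A new criterion is added as data; the checking code stays unchanged.
RULES.append({"attribute": "material", "kind": "required"})
after = check(record, RULES)

print(before)
print(after)
```

Keeping the criteria as data rather than code is one way such a rule set could grow with new requirements; the same `check` function could then serve an ad hoc pre-check, a workflow step or a batch analysis.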

Benefits

- Very high user acceptance because users can correct errors themselves

- Valid data and efficient workflow processes

- Faster work in Teamcenter through error prevention

Further advantages

- The validation solution ensures correct and consistent data in Teamcenter.

- Corrective actions are standardized and uniform. Aborted downstream processes, such as production releases or data transfers to ERP, are prevented.

- Risks of using invalid or outdated information in subsequent business processes are avoided.

- Ongoing development of the validation criteria supports efficient, error-free work in Teamcenter over the long term.

Requirements for professional data management in Teamcenter

Development processes are represented in Teamcenter by workflows. Data and models are generated, changed, released and subsequently transferred to ERP systems such as SAP for further use.

Efficient work thanks to excellent data quality

Efficient work in Teamcenter requires good data quality, which means consistently applying design standards as a prerequisite for error-free processes.

Effort for solving data errors in Teamcenter

Teamcenter's standard error messages often give little indication of an error's origin and cause. Corrections usually have to be made by the IT help desk and are often complicated, time-consuming and repetitive.

Conclusion

Without systematic improvement of data quality through professional tools, the administrative effort for corrections grows continuously as the data volume increases, and working in Teamcenter becomes more and more complex and confusing for the user.

Demo Active Workspace:

Please watch the video to see our solution embedded in Active Workspace using ad hoc validation.

Demo Rich Client:

Please watch the video to see our solution integrated in the Teamcenter Rich Client using ad hoc validation.